The worst part of launching an AI project isn’t the algorithm; it’s the data. I’ve watched entire teams derail because they treated AI data conditioning like a footnote. Just last month, a Microsoft engineering team spent three months polishing their generative model, only to discover that the real bottleneck was raw sensor data formatted in 12 different ways. The timestamps were off by hours, the units were mixed (Celsius vs. Fahrenheit), and 15% of the readings were outright corrupted. The AI still performed, but like a driver failing a sobriety test. The fix? Not new code. Not better GPUs. Just proper conditioning.

AI data conditioning: The invisible tax on projects

The problem isn’t that teams don’t understand data conditioning. They do. The issue is that they treat it as a one-time cleanup instead of a continuous discipline. Take Microsoft’s Azure IoT initiative. In 2024, a pilot with 500 industrial sensors yielded 98% accuracy in testing. When deployed? The real-world model failed 37% of the time. Why? The data scientists had standardized the lab environment but forgot that the production sensors reported timestamps in milliseconds while the lab used seconds. A 1-second delay arrived as a value of 1,000, and the AI interpreted it as a catastrophic spike. The solution wasn’t algorithmic: they recalibrated the conditioning pipeline to match the real-time operational clock of the field devices. AI data conditioning isn’t about making data pretty. It’s about making it compatible with the model’s expectations.
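A seconds-versus-milliseconds mismatch like this one can be caught with a small normalization step. The sketch below is illustrative only, not the pipeline described above: the function names and the magnitude cutoff are assumptions, and the heuristic only works for epoch timestamps from roughly 1973 onward.

```python
from datetime import datetime, timezone

# Assumed cutoff: epoch values at or above 1e11 are treated as milliseconds.
# 1e11 seconds lies past the year 5000, while 1e11 milliseconds is early 1973,
# so realistic sensor timestamps fall cleanly on one side or the other.
MS_THRESHOLD = 1e11


def to_epoch_seconds(raw: float) -> float:
    """Return an epoch timestamp in seconds, whatever unit it arrived in."""
    return raw / 1000.0 if abs(raw) >= MS_THRESHOLD else float(raw)


def to_utc(raw: float) -> datetime:
    """Convert a raw epoch value (seconds or milliseconds) to an aware UTC datetime."""
    return datetime.fromtimestamp(to_epoch_seconds(raw), tz=timezone.utc)


lab_reading = 1_700_000_000        # lab sensor, seconds
field_reading = 1_700_000_001_000  # field sensor, milliseconds, one second later

# After normalization the gap is one second, not a thousand-fold spike.
delta = to_epoch_seconds(field_reading) - to_epoch_seconds(lab_reading)
print(delta)
```

A magnitude check is a blunt instrument. Where the device metadata states the unit, reading it from there is safer than guessing.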

The three conditioning traps most teams fall into

Professionals often skip these critical stages, even when they think they’re doing everything right:

- Assuming “clean” is the same as “conditioned”: data might be free of errors, but if it lacks contextual anchors (like geographic tags for weather data), it is still noise.

- Over-reliance on automation: tools can standardize formats, but they can’t judge which inconsistencies matter. A null value in a lab dataset might mean “not recorded,” but in manufacturing it could mean “critical failure.”

- Static conditioning pipelines: data drift is inevitable. A 2025 study found that 48% of conditioned datasets degrade within six months without continuous monitoring.

Yet most teams treat conditioning like a checklist: “We ran a regex, so we’re good.” Wrong. The best practitioners audit the conditioned output as rigorously as the raw input.
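Auditing conditioned output for drift does not have to start with heavy tooling. As a minimal sketch, assuming a single numeric feature and a stored baseline sample, the check below measures how far the current mean has moved in units of the baseline standard deviation. The function names and the threshold of 2.0 are illustrative choices, not an established standard, and a production monitor would likely use a fuller distribution test.

```python
import statistics


def mean_shift(baseline, current):
    """Distance between the current and baseline means, in baseline standard deviations."""
    mu = statistics.fmean(baseline)
    sigma = statistics.stdev(baseline)
    current_mu = statistics.fmean(current)
    if sigma == 0:
        # A constant baseline: any movement at all counts as an unbounded shift.
        return 0.0 if current_mu == mu else float("inf")
    return abs(current_mu - mu) / sigma


def has_drifted(baseline, current, threshold=2.0):
    """Flag drift when the mean shift exceeds the (assumed) threshold."""
    return mean_shift(baseline, current) > threshold


baseline_temps = [20.0, 21.0, 19.0, 20.5, 19.5]  # conditioned values at launch
stable_batch = [20.2, 19.8, 20.1]
shifted_batch = [25.0, 26.0, 24.0]

print(has_drifted(baseline_temps, stable_batch))
print(has_drifted(baseline_temps, shifted_batch))
```

Running a check like this on every conditioned batch, not just on the raw input, is what turns the pipeline from a checklist into a monitored process.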

Microsoft’s real-world conditioning playbook

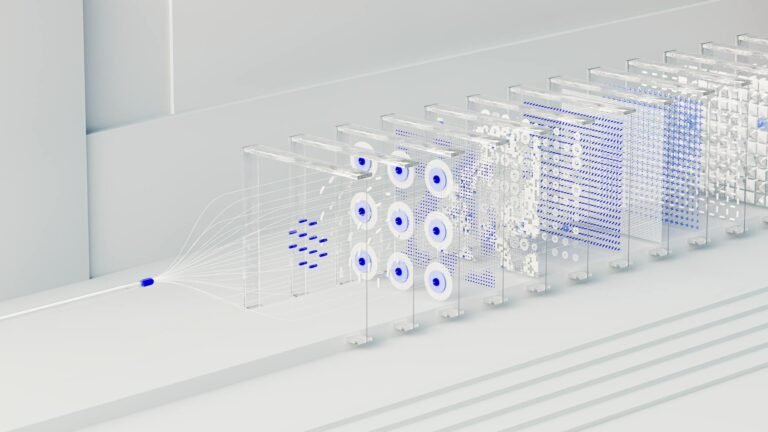

The most effective AI data conditioning doesn’t happen in theory; it happens in the trenches. Consider Microsoft’s partnership with a global logistics provider tracking 20,000 shipments daily. Their initial model reached only 68% accuracy because the data had been conditioned in isolation. The fix? They integrated three conditioning layers:

- Temporal alignment: Standardized all timestamps to UTC with 5-minute granularity (critical for cross-timezone delays).

- Synthetic enrichment: Added probabilistic delay estimates for missing ETA data (e.g., “95% chance arrival at 14:00”).

- Bias-aware sampling: Overrepresented high-value shipments (e.g., pharmaceuticals) to prevent the model from favoring “average” routes.
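The first of these layers, temporal alignment, is the easiest to sketch. The example below is an assumption-laden illustration rather than the logistics provider's actual code: it converts a timezone-aware timestamp to UTC and floors it to a 5-minute bucket. The function name and the fixed UTC+1 offset are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def align_to_utc_bucket(ts: datetime, minutes: int = 5) -> datetime:
    """Convert an aware datetime to UTC and floor it to a bucket of `minutes`.

    Assumes `minutes` divides 60 evenly, so buckets never straddle an hour.
    """
    if ts.tzinfo is None:
        # Guessing a timezone silently is exactly the kind of error to avoid.
        raise ValueError("naive timestamp: attach a timezone before aligning")
    utc = ts.astimezone(timezone.utc)
    floored_minute = utc.minute - utc.minute % minutes
    return utc.replace(minute=floored_minute, second=0, microsecond=0)


# Hypothetical scan event recorded at a depot running on UTC+1.
depot_tz = timezone(timedelta(hours=1))
scan_time = datetime(2024, 1, 15, 14, 37, 42, tzinfo=depot_tz)

aligned = align_to_utc_bucket(scan_time)
print(aligned.isoformat())  # 2024-01-15T13:35:00+00:00
```

Rejecting naive timestamps instead of assuming a zone keeps cross-timezone errors visible at the point where they enter the pipeline.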

The result? A 42% reduction in delivery prediction errors, without retraining the model. The key wasn’t the algorithm. It was treating conditioning as part of the model’s architecture, not an afterthought.

Moreover, Microsoft’s teams use live conditioning dashboards that flag drift in real time. For example, during COVID-19 supply chain disruptions, the system detected that package weight anomalies correlated with transit delays (not just missing data). The conditioning pipeline auto-adjusted weights to account for shifting logistics norms-something static conditioning would have missed entirely.

Start conditioning before you build

You don’t need a data science PhD to fix this. Start with one messy dataset and ask: What’s the smallest conditioning change that would move the needle? For example:

- If your customer support logs use 7 different “urgency” labels, standardize them to a 3-tier severity system (critical/medium/low).

- If your sensor data has 4 timestamp formats, coerce all to ISO 8601 and log the conversion rules.

- If your training data lacks geographic context, infer missing locations from IP addresses (with 92% accuracy, per Microsoft’s Azure Maps team).
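The first two suggestions fit in a few lines of standard-library Python. The sketch below is a starting point under stated assumptions: the seven raw labels, the four timestamp formats, and the decision to treat every input as UTC are all hypothetical and would need to come from an audit of your own data.

```python
from datetime import datetime, timezone

# Hypothetical raw labels; a real mapping comes from auditing your own logs.
SEVERITY_MAP = {
    "p0": "critical", "urgent": "critical", "sev1": "critical",
    "high": "medium", "normal": "medium",
    "minor": "low", "whenever": "low",
}


def normalize_urgency(label: str) -> str:
    """Map a raw urgency label onto the 3-tier scale, failing loudly on unknowns."""
    key = label.strip().lower()
    if key not in SEVERITY_MAP:
        raise KeyError(f"unmapped urgency label: {label!r}")
    return SEVERITY_MAP[key]


# Assumed input formats, tried in order. All inputs are assumed to be UTC.
TIMESTAMP_FORMATS = (
    "%Y-%m-%dT%H:%M:%S",
    "%Y-%m-%d %H:%M:%S",
    "%d/%m/%Y %H:%M",
    "%Y%m%d%H%M%S",
)


def to_iso8601(raw: str) -> tuple[str, str]:
    """Return (ISO 8601 string, format that matched) so the rule can be logged."""
    for fmt in TIMESTAMP_FORMATS:
        try:
            parsed = datetime.strptime(raw.strip(), fmt)
        except ValueError:
            continue
        return parsed.replace(tzinfo=timezone.utc).isoformat(), fmt
    raise ValueError(f"unrecognized timestamp format: {raw!r}")


print(normalize_urgency(" URGENT "))
print(to_iso8601("15/01/2024 14:37"))
```

Returning the matched format alongside the converted value is one way to log the conversion rules: every output row can be traced back to the rule that produced it. Raising on unknown labels or formats, instead of guessing, keeps new inconsistencies from slipping through silently.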

The most surprising insight? Conditioning doesn’t require perfection. It requires consistency. A model will adapt better to controlled chaos than to wild swings between datasets. And that’s where the real work begins.