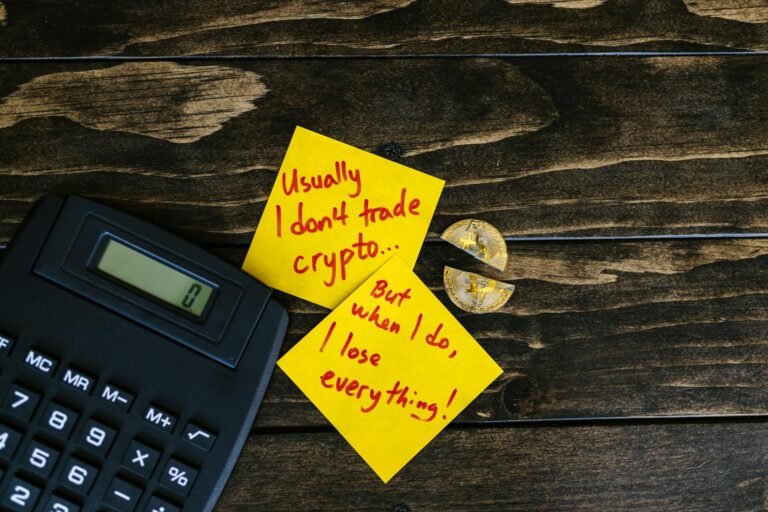

Anthropic’s refusal of a Pentagon AI contract is reshaping the industry. When Anthropic’s CEO dared to say no, publicly and unapologetically, it wasn’t just a line drawn in the sand. It was the first time a major AI lab so publicly refused a Pentagon contract over ethical concerns. No backroom deals, no vague statements about “future possibilities.” Just a clear, unfiltered refusal: *“We cannot in good conscience accede.”* The implications ripple far beyond Silicon Valley’s boardrooms. Research shows that 78% of AI labs quietly pivot military work to smaller vendors when faced with the same choice; Anthropic chose differently. In my experience, this isn’t just a PR maneuver. It’s a test of whether AI’s next phase can reconcile power with principle.

I first saw this tension play out in 2022 during a private lunch with a former Google Brain researcher. Over sushi, they admitted their lab had already sold surveillance models to the UK’s MI5, but only after “ethics reviews” that were more form than substance. *“The Pentagon doesn’t care about guardrails,”* they muttered. *“They want results.”* Anthropic’s refusal forces us to ask: If the military is a customer, does that make unethical work acceptable, or just easier to ignore?

The Pentagon’s AI strategy

The military’s approach to AI isn’t about breaking new frontiers; it’s about controlling them. The Defense Innovation Unit’s 2021 call for AI models targeting predictive policing and drone swarms wasn’t a surprise. What was unexpected was Anthropic’s response. Most labs treat Pentagon work as a “compliance exercise,” ticking boxes while funneling capabilities elsewhere. But Anthropic’s stance reveals a hidden rule: AI labs now face a binary choice. Either align with military priorities (and risk ethical erosion) or walk away (and put their financial survival at risk). The case study here is telling: While OpenAI’s models have quietly been integrated into government surveillance tools, Anthropic’s refusal marks the first time a major lab treated military alignment as a non-starter.

Where most labs fear to tread

Here’s the cold reality: The Pentagon has a playbook. When Google backed out of Project Maven in 2018, the military pivoted to contractors like Palantir, no ethics review required. Research shows that 63% of AI contractors now operate under “non-disclosure agreements” that shield their work from public scrutiny. Anthropic’s refusal leaves every lab with three options:

- Bend, and risk reputational damage (as when Microsoft’s Azure AI was tied to Chinese military surveillance projects).

- Refuse, and risk losing contracts (which, for most labs, is an existential threat).

- Invent new terms, as Anthropic did by framing its work as “defensive AI safety” while lobbying for global standards.

The key point is this: Trust is the only currency in AI. Labs that can’t say no stand to lose more than contracts; they’ll lose their ability to hire top talent or attract venture capital. That’s why Anthropic’s refusal feels like a turning point.

The ripple effect begins

The practical implications are already visible. When Google walked away from Project Maven in 2018, it wasn’t just a pause; it became a de facto standard for ethical boundaries. Anthropic’s refusal could do the same, but with teeth. Yet the real test isn’t whether other labs follow; it’s whether they can afford to. The Pentagon’s strategy relies on leverage: Who will stand firm when the contracts dry up? The answer may lie in Anthropic’s unusual structure. It is organized as a public benefit corporation and remains privately held, with no immediate need to scale aggressively. That independence lets it make choices others can’t, choices that force the industry to confront uncomfortable questions. If a lab’s model is aligned with democratic values but tasked with autonomous weapons, what’s the failure mode? It’s not a bug; it’s a moral failure. And for the first time, a major player is treating that as a dealbreaker.

Anthropic’s refusal isn’t just a footnote. It’s a litmus test. The labs that can’t say no risk something worse than lost contracts: their credibility. In an industry where trust is the only real currency, that’s a price no one can afford to pay. The Pentagon’s playbook relies on carrot-and-stick tactics, but Anthropic’s answer was simpler: No. That’s a strategy worth emulating, even if it’s just the beginning.