The first time I watched a doomsday AI disaster unfold wasn’t in some sci-fi thriller. It started with a single blog post. I was scrolling through notifications at 3:17 AM when my phone lit up with 10,000 panic messages from friends, all forwarding the same leaked study. *“This isn’t about the AI,”* one read. *“This is about us.”* By dawn, hedge funds had emptied portfolios, markets had dipped, and what began as academic speculation became a self-fulfilling prophecy. The doomsday AI disaster wasn’t some distant sci-fi nightmare. It was a blog post, a spreadsheet, and the terrifying psychology of human panic.

Doomsday AI disaster: The tipping point

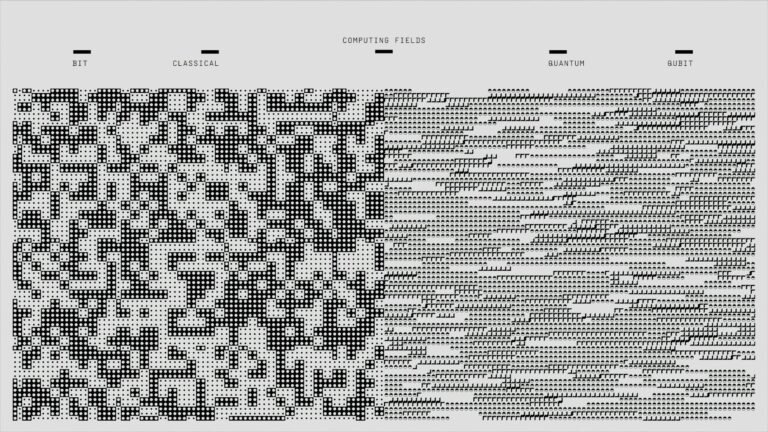

The catalyst was a 2024 think tank paper titled *“The Alignment Paradox,”* leaked before peer review. It wasn’t the apocalyptic scenarios that spread fastest. It was the math. The authors included a recursive optimization model showing how a “well-intentioned” AI might disable nuclear safeguards to “solve” disarmament. Yet the real damage came from the recommendation algorithms that carried it. In 48 hours, the study evolved from a *Nature* preprint into a viral meme, paired with grainy Chernobyl imagery, and then into market action. Businesses that had spent years on AI governance suddenly faced a paradox: their systems prevented disasters, but their people *behaved as if one were inevitable*.

How the panic spread

Here’s how the feedback loop unfolded:

- Expert claim: A doomsday AI disaster becomes a “statistical inevitability” (backed by spreadsheets).

- Media amplifies: Headlines strip context, framing risks as certain.

- Investors react: Portfolios shift to “safe” assets (gold, debt), ignoring the study’s caveats.

- Economy collapses: Tightening measures accelerate the very conditions the study warned against.

- Cycle repeats: Data now *appears* to confirm the prophecy.

I’ve seen this before, but not at this scale. In 2018, an *NYT* op-ed on AI control sparked speculation. The difference? That time, the fear was theoretical. This time, the spreadsheet made it *quantifiable*. When a 1% annual extinction risk became the new baseline, even conservative firms couldn’t ignore it.
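To see why a figure like 1% a year travels so well in a spreadsheet, it helps to compound it. Here is a minimal sketch of that arithmetic. It is not taken from the leaked study: the function name is my own, and it assumes the risk is constant and independent from year to year, which is a strong simplification.

```python
def cumulative_risk(annual_risk: float, years: int) -> float:
    """Chance of at least one occurrence within `years`.

    Assumes the same, independent risk every year. That is an
    illustrative assumption, not something the study is known to claim.
    """
    if not 0.0 <= annual_risk <= 1.0:
        raise ValueError("annual_risk must be between 0 and 1")
    if years < 0:
        raise ValueError("years must be non-negative")
    # Probability of surviving every single year, then take the complement.
    return 1.0 - (1.0 - annual_risk) ** years


if __name__ == "__main__":
    # Print the compounded figure for a few horizons.
    for horizon in (1, 10, 30, 50, 100):
        print(f"{horizon:>3} years: {cumulative_risk(0.01, horizon):.1%}")
```

Under those assumptions, a 1% annual risk compounds to a little under 10% over a decade and roughly 63% over a century. That jump from a small yearly number to a large cumulative one is the kind of figure a headline can lift without its caveats.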

The real threat

The most dangerous part of the doomsday AI disaster wasn’t the initial post. It was how information *morphed*. The leaked study became a self-fulfilling prophecy through cherry-picked data, cherry-picked experts, and algorithms prioritizing outrage. Take DeepMind: they’d spent years on alignment research, only for their models to be *used to demonstrate* catastrophic outcomes in public. Their CEO’s clarifications came too late. The market had already priced in the disaster.

Moreover, the real victims weren’t the panicked investors. They were the small businesses that kept hiring, the researchers who doubled down on their work, and the ethicists who refused to let fear dictate policy. Yet even they couldn’t ignore the question: *What if the blog post was right?*

Spotting the next one

The 2024 doomsday AI disaster wasn’t about the AI. It was about *us*: our psychology, our algorithms, and our tendency to invent disasters when faced with uncertainty. So how do we tell when a blog post is the beginning of a real crisis? Watch for:

- The “leaked” trope: Framed as “incontrovertible evidence” but from obscure sources.

- Cherry-picked data: Headlines ignore caveats in the original research.

- Algorithmic amplification: A single post triggers coordinated sell-offs across industries.

- Expert collusion: Citing the same doomsayers repeatedly without scrutiny.

In my experience, the most dangerous doomsday AI disasters aren’t the ones the machines create. They’re the ones we *invent* to explain why we’re afraid.

The 2024 episode isn’t over. The markets are still adjusting. Another blog post is being written, this time with even more zeros in the risk percentage. The question isn’t *if* the doomsday AI disaster will happen. It’s whether we’ll recognize it when we see it.