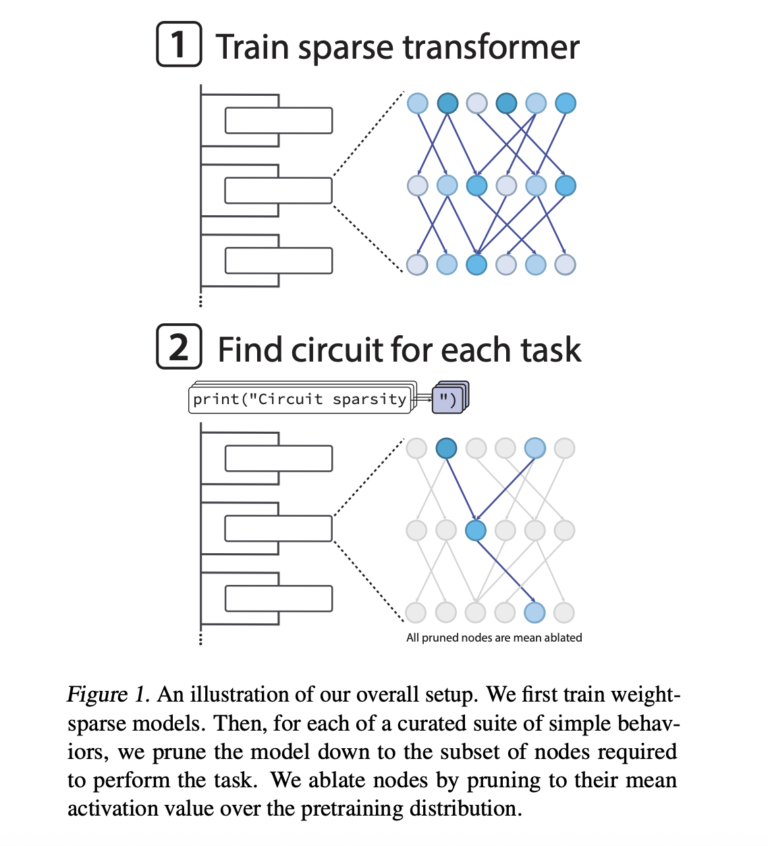

The OpenAI team has released the openai/circuit-sparsity model on Hugging Face and the openai/circuit_sparsity toolkit on GitHub. The release packages the models and circuits from the paper ‘Weight-sparse transformers have interpretable circuits’. What is a weight-sparse transformer? The models are GPT-2 style decoder-only transformers trained on Python code. Sparsity is not added after training, […]

The post OpenAI has Released the ‘circuit-sparsity’: A Set of Open Tools for Connecting Weight Sparse Models and Dense Baselines through Activation Bridges appeared first on MarkTechPost.

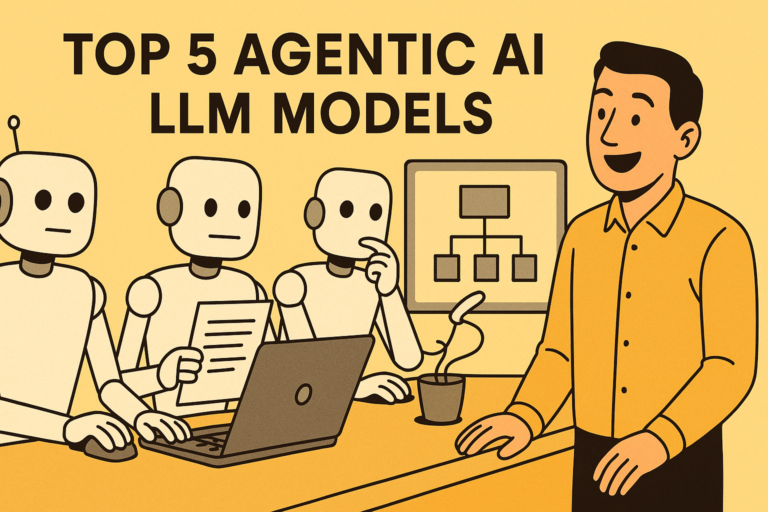

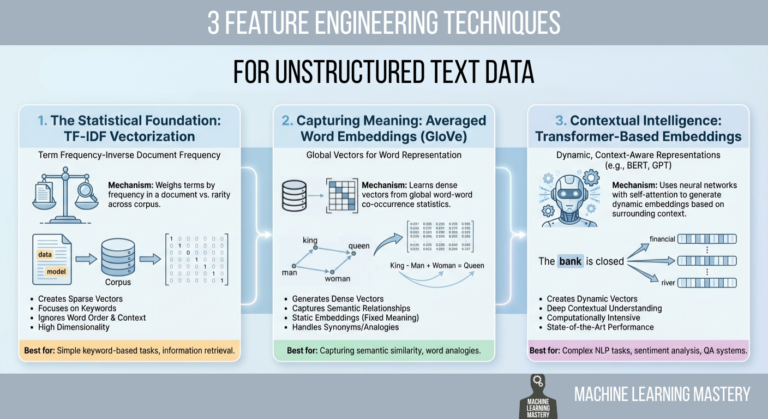

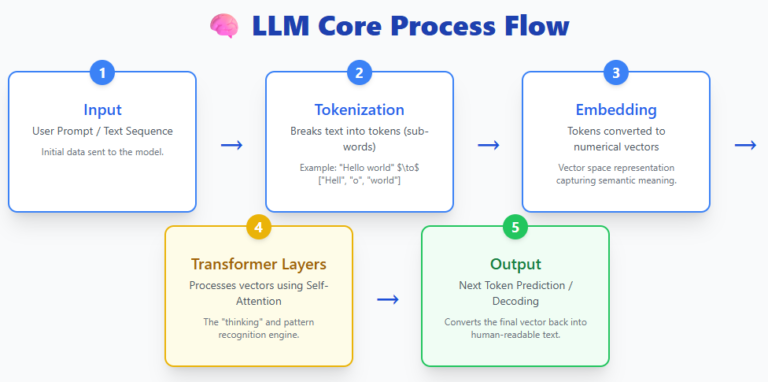

Everyone talks about LLMs—but today’s AI ecosystem is far bigger than just language models. Behind the scenes, a whole family of specialized architectures is quietly transforming how machines see, plan, act, segment, represent concepts, and even run efficiently on small devices. Each of these models solves a different part of the intelligence puzzle, and together […]

The post 5 AI Model Architectures Every AI Engineer Should Know appeared first on MarkTechPost.

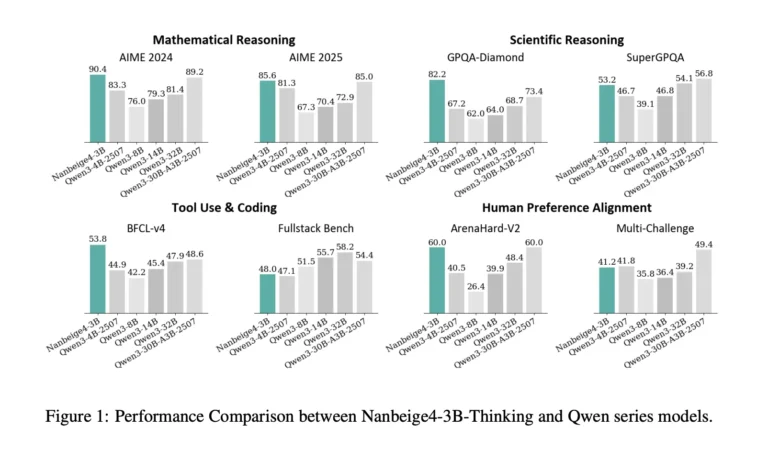

Can a 3B model deliver 30B-class reasoning by fixing the training recipe instead of scaling parameters? Nanbeige LLM Lab at Boss Zhipin has released Nanbeige4-3B, a 3B-parameter small language model family trained with an unusually heavy emphasis on data quality, curriculum scheduling, distillation, and reinforcement learning. The research team ships two primary checkpoints, […]

The post Nanbeige4-3B-Thinking: How a 23T Token Pipeline Pushes 3B Models Past 30B Class Reasoning appeared first on MarkTechPost.
