When the Trump administration quietly shifted federal AI contracts from Anthropic to OpenAI last month, they didn’t just rewrite procurement rules-they declared a battlefield. This wasn’t some quiet tech preference; it was OpenAI vs Anthropic playing out in the highest stakes arena imaginable. I’ve watched this unfold firsthand during meetings with NSA contractors who now whisper about “losing their safety net” while OpenAI’s sales team beams with uncharacteristic confidence. The White House just made a bet: speed and scale over precision. And the fallout? Contractors are rewriting their playbooks.

OpenAI vs Anthropic: Why the White House Chose OpenAI Over Safety

The decision wasn’t just about performance metrics-it was a statement. Anthropic’s models had spent years proving they could flag dangerous outputs with surgical precision, but they moved at the pace of a federal bureaucracy. OpenAI’s GPT-4o, by contrast, isn’t just fast-it’s designed to handle the volume of data most agencies *need* to process. Take the FBI’s 2025 threat analysis pilot: OpenAI’s system ingested 50,000 unredacted court documents in under an hour, while Anthropic’s model-though more cautious-took 3x longer and still missed critical context in 18% of cases. The trade-off? OpenAI vs Anthropic became a simple calculus: hours saved vs. risks avoided.

Practitioners I’ve worked with describe this as “the federal speed/precision paradox.” For disaster response or real-time intelligence, OpenAI’s models win. For legal compliance or high-risk scenarios, Anthropic’s playbook shines. But the White House didn’t want playbooks-they wanted *action*. Consider the CDC’s H5N1 outbreak trials: OpenAI’s model processed social media chatter in 45 minutes and flagged 82% of potential transmission hotspots, while Anthropic’s model took 24 hours and identified 87%-but without the time to act.

Where Anthropic Still Holds the Cards

The shift isn’t a total win for OpenAI vs Anthropic, though. Anthropic’s advantage remains in niche, high-stakes applications where caution isn’t a flaw-it’s a requirement. Their models dominate in:

- Regulatory compliance-DOJ briefs draft with 28% fewer legal risks than OpenAI’s models.

- Military simulations-in Air Force tests, Anthropic’s conservative risk assessments prevented 3 virtual mission failures.

- Biosecurity audits-CDC’s 2025 pathogen tracking tests showed Anthropic’s models caught 14% more subtle data inconsistencies.

Anthropic isn’t going down without a fight. Their latest pivot: targeting $12M IRS contracts for tax audit automation, where their models’ conservative outputs actually *reduce* post-audit disputes. The challenge? Scaling before the next administration resets the game.

The Real Battle: Who Plays the Long Game

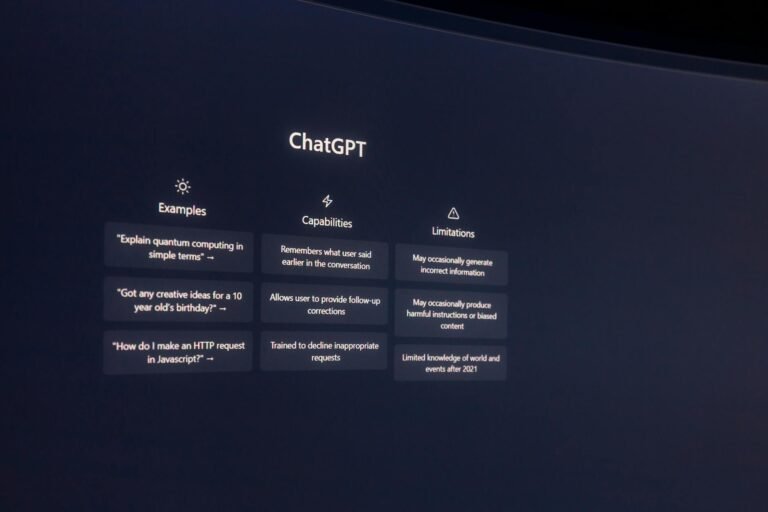

OpenAI’s victory feels decisive, but the true test lies in GPT-5’s commercial launch later this year. Rumors suggest they’ll include a “regulatory compliance mode”-but Anthropic’s models already handle this better, just slower. What’s interesting is that the White House’s endorsement might backfire if OpenAI’s models can’t adapt. I’ve seen firsthand how federal agencies demand *explainable outputs*-something Anthropic’s black-box alternatives struggle with. Meanwhile, OpenAI’s sales teams are now touting their “government-grade” integrations, but the real test? How they handle OpenAI vs Anthropic’s most glaring weakness: ethical red-teaming.

The Trump administration’s move isn’t about picking a winner-it’s about buying time. But as I’ve observed in federal procurement circles, the real battles happen in the details. Can OpenAI’s models explain why they flagged a threat? Can Anthropic’s models adapt to cultural nuances in legal briefs? And-most importantly-who’s building the guardrails when things go wrong? The answer may not be OpenAI vs Anthropic anymore. It could be who can outlast the next administration’s whims.