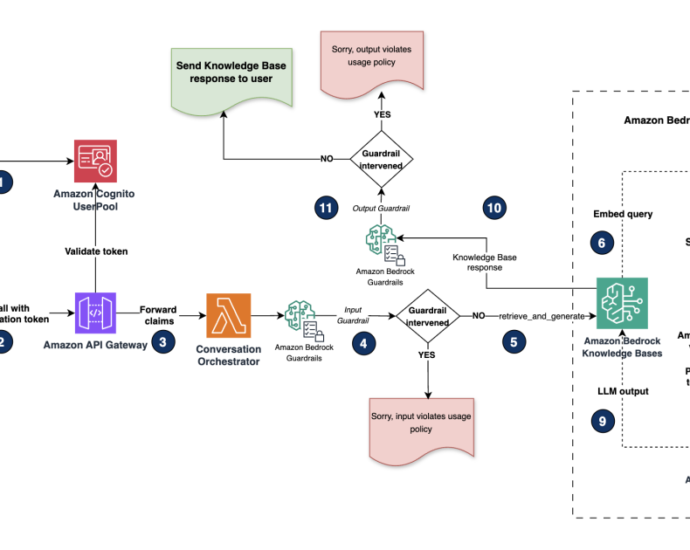

Build scalable containerized RAG based generative AI applications in AWS using Amazon EKS with Amazon Bedrock | Amazon Web Services

Generative artificial intelligence (AI) applications are commonly built using a technique called Retrieval Augmented Generation (RAG), which gives foundation models (FMs) access to additional data they didn’t have during training. This data is used to enrich the generative AI prompt so that the model delivers more context-specific and accurate responses without continuously retraining the FMs.
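To make the idea of enriching a prompt concrete, here is a minimal sketch of the retrieve-then-augment step in Python. It is an illustration only and does not reflect the architecture from the post: the `DOCUMENTS` list, the keyword-overlap scoring, and the `retrieve` and `build_prompt` helpers are all hypothetical stand-ins. A real deployment would typically use embeddings and a vector store for retrieval, and would send the final prompt to an FM instead of printing it.

```python
import re

# Hypothetical in-memory corpus standing in for a real knowledge base.
DOCUMENTS = [
    "Amazon EKS is a managed Kubernetes service for running containers on AWS.",
    "Amazon Bedrock provides API access to foundation models.",
    "Retrieval Augmented Generation adds retrieved context to the prompt.",
]


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=2):
    """Rank documents by naive keyword overlap with the question.

    This is a toy stand-in for semantic search (assumption: real systems
    would compare embeddings rather than raw tokens).
    """
    question_tokens = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_tokens & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, context_docs):
    """Enrich the user question with the retrieved context."""
    context = "\n".join(f"- {doc}" for doc in context_docs)
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


question = "What is Amazon EKS?"
context_docs = retrieve(question, DOCUMENTS)
prompt = build_prompt(question, context_docs)

# In a real application this prompt would be passed to a foundation model.
print(prompt)
```

The model itself is never modified in this flow. Only the prompt changes, which is why new or updated data can be used without retraining.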