Fine-tune OpenAI GPT-OSS models using Amazon SageMaker HyperPod recipes | Amazon Web Services

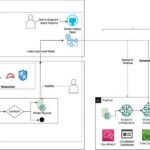

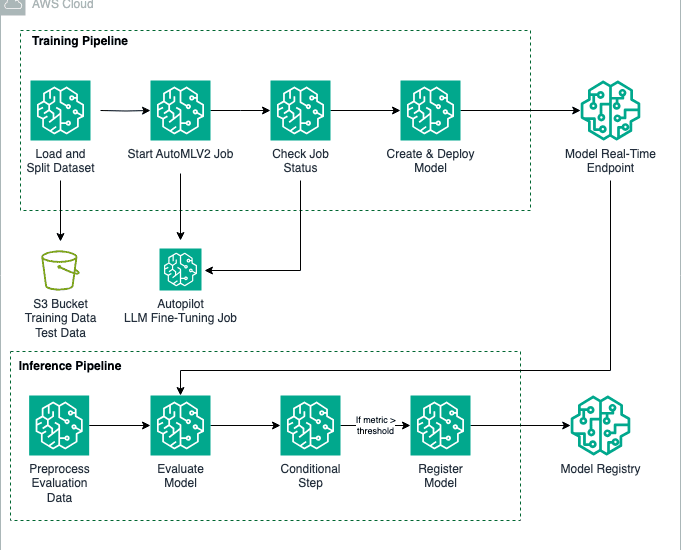

This post is the second part of the GPT-OSS series focusing on model customization with Amazon SageMaker AI. In Part 1, we demonstrated fine-tuning GPT-OSS models using open source Hugging Face libraries with SageMaker training jobs, which support distributed multi-GPU and multi-node configurations, so you can spin up high-performance clusters…