Improve RAG performance using Cohere Rerank | Amazon Web Services

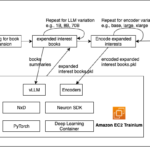

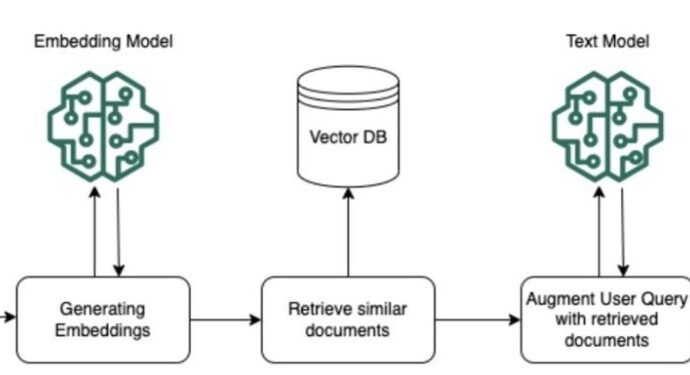

This post is co-written with Pradeep Prabhakaran from Cohere.

Retrieval Augmented Generation (RAG) is a powerful technique that can help enterprises develop generative artificial intelligence (AI) applications that integrate real-time data and enable rich, interactive conversations using proprietary data. RAG allows these AI applications to tap into external, reliable sources...