Speed up delivery of ML workloads using Code Editor in Amazon SageMaker Unified Studio | Amazon Web Services

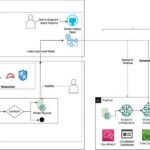

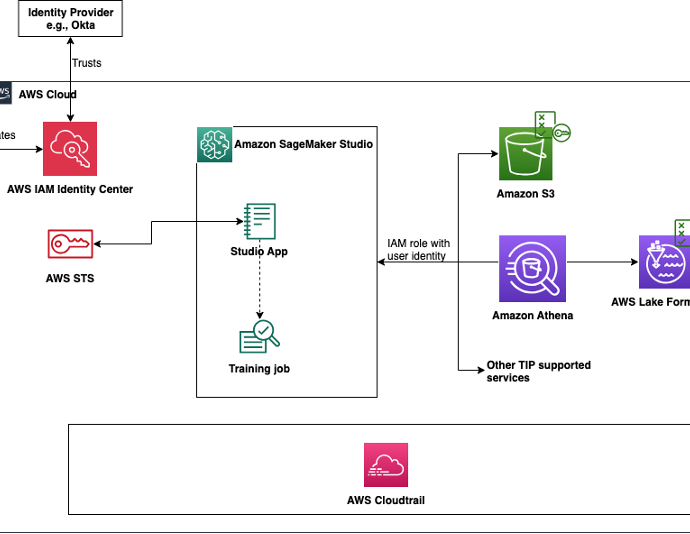

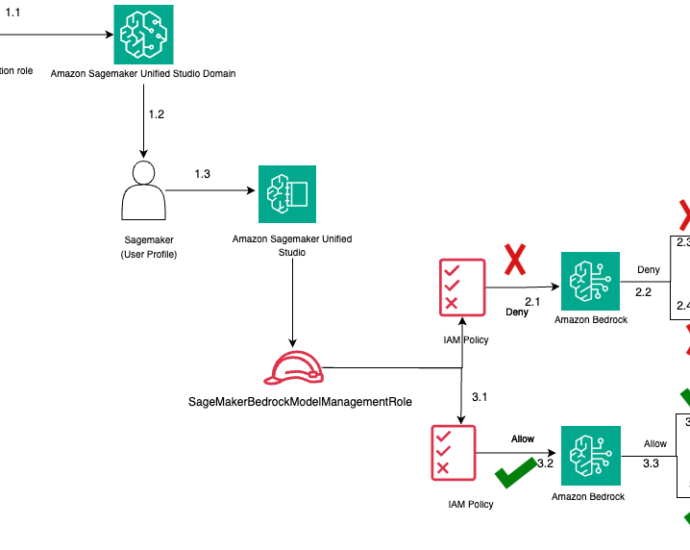

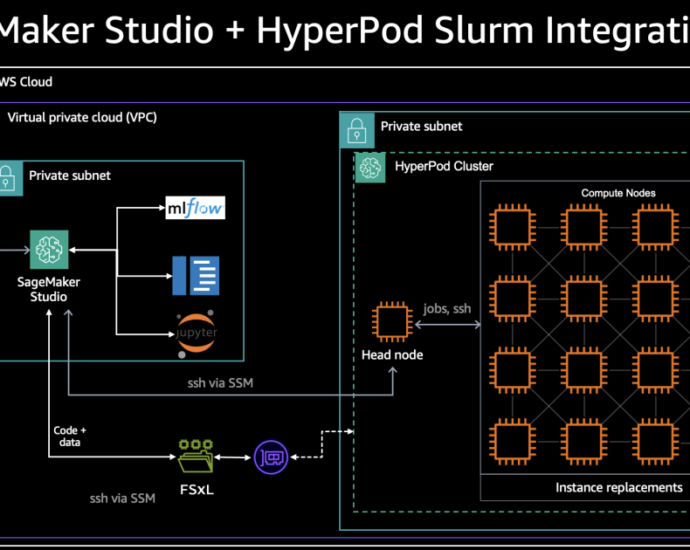

Amazon SageMaker Unified Studio is a single integrated development environment (IDE) that brings together your data tools for analytics and AI. As part of the next generation of Amazon SageMaker, it contains integrated tooling for building data pipelines, sharing datasets, monitoring data governance, running SQL analytics, and building artificial intelligence and machine learning (AI/ML) models.